“First to Serve” by Algis Budrys

from The Metal Smile, ed. Damon Knight

Belmont Science Fiction, 1968

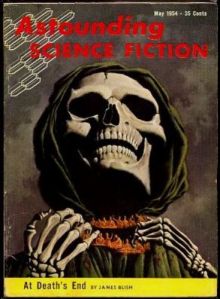

Originally published in Astounding Science Fiction, May 1954

Price I paid: none

“DO NOT FOLD, BEND, OR MUTILATE”

marked the beginning of our cybernetic society. How will it end?

The varied answers to that question have proved to be fertile ground for some of the greatest science fiction imaginations. But perhaps we shouldn’t look too closely into the future of cybernetics. It may be that the survival capacity of the thinking machine is greater than that of its maker…

I promise that it’s a coincidence. I did not intend to read a story about robot soldiers on the eve of Memorial Day. It just worked out that way. I’m not complaining, I just wanted you to know.

I say this is a story about robot soldiers, but I’m not accurate on that front. The story is about a singular robot soldier, and it never goes to war. The reason it never goes to war is the point of the story.

I have to admit, this is my first time reading Algis Budrys. He seems like he was a cool guy, and I have two copies of The Falling Torch, a novel that looks right up my alley, somewhere around this house.

I also admit that I haven’t the foggiest idea how to pronounce the man’s name. In my head I’ve been pronouncing it a particular way, but it turns out the dude is from Lithuania and I don’t know where to start with that. I just looked up the Lithuanian language and it has stuff like pitch accents. Twelve noun declensions! Baltic languages are so far beyond my linguistic skills I can’t even stand it.

The story is told from the point of view of the robot, named Pim. Pim is derived from the robot’s official name, Prototype Mechanical Man I. I guess this is what you’d call an epistolary story, but it’s actually in the form of a diary, so there’s probably a more specialized name for it that I can’t find. Furthermore, it’s a robot diary, which itself probably has a specialized sci-fi fandom name. If it doesn’t, I’m calling it right now. It’s called a robo-diaretical epistolaritation. Tell your friends.

Pim’s inventor is a fella named Victor Heywood, whom everyone refers to by surname. This makes sense, because Heywood works for the military. It’s never stated which particular military he works for, which is fine because it doesn’t matter. It also doesn’t matter that the story takes place in 1974, but we get that information anyway. I guess that’s part of the diarist format that is unavoidable, especially with robot diarists. You know what I’m talking about.

Because Pim is the point-of-view character, much of the story consists of him seeing things he doesn’t understand and then asking Heywood what’s going on. The story has a real “turn to the camera and explain” feel that I wasn’t super keen on, but since it’s a short story I think it worked out well enough. If it had been a whole novella, I would have screamed. A novel I might well have thrown away.

I was almost ready to say that robots aren’t the point of the story at all and that it has more to do with the military in general, but that’s not accurate either. Still, a large part of the story is, in fact, critical of the military.

Heywood works for a military division called COMASAMPS, which stands for something. His job is to come up with an effective military robot. He has done so, and the problem is that he’s done his job too well. At least that’s what he’s afraid of. As the story progresses, we learn that he didn’t so much do too well as he did just well enough, and that just well enough is the dangerous thing.

The story deals with the fact that Heywood and his robot division are constantly being spied on by the secret intelligence forces of his country’s other branches of the military. I think he works for the army, and so the navy and air force and so forth are keeping tabs to make sure that the army’s not getting a leg-up on them. The word “funding” was never used, but I imagine that’s a large part of the reason for all this intranational espionage.

This reminds me of being very young—four or five or so—and thinking that that’s what war was. A never-ending conflict between the army and the navy and the air force and the marines or whatever. They all just fought each other, all the time. Why would they do that? I dunno. I was a kid.

But tell me this, dear reader: Is the reality any less stupid?

One particular spy, a guy named Ligget, works for the CIC. I assume that’s meant to refer to the Counter-Intelligence Corps, but there are a lot of possibilities, my favorite of which is Citizenship and Immigration Canada. Anyhoozle, Ligget is investigating why Heywood hasn’t made any progress in his robot soldier project. He also thinks that Heywood is purposefully sabotaging the project, so he confronts him.

What follows is the largely expository part of the story, where we learn a lot about what the deal is. Some of it repeats what Heywood explained directly to the robot earlier in the story. This is the meat of the story, at least on the robot angle, and it’s a pretty fascinating look at the kinds of concerns a person in 1954 would have about AI and robotics.

Heywood’s experimentation with Pim has revealed that the only way to create a robot soldier worth the effort, time, and money is to create it as a fully autonomous unit. This unit should be capable of assessing information, making decisions, implementing those decisions, and generally progressing with as little human input as possible. Furthermore, because it’s a robot and all this is a given, it will be smarter, stronger, faster, and more powerful than a human soldier. Again, that part needn’t be questioned. It is a robot.

Heywood tried other setups. A remotely-controlled robot with human input is too slow to be effective. This is probably the issue that seems most awkward in hindsight. Budrys was writing in the ’50s, and the only way he could imagine communicating with the robot would be via…what? Magnetic tape? Long wires? Assembly language tapped out in Morse code?

Telecommunications have come a long way in 60+ years, so this first problem seems to be the one that reality ran with most successfully. This is largely because we decided that building some kind of man-bot wasn’t the best option either. Drone strikes are problematic in a whole lot of ways, but we can’t deny that they work, for both of those reasons.

One of Heywood’s other options was the ability for the robot to accept orders, but not interpret them or modify them. The problem with this setup is largely the human element. The person giving the orders would need to think hard about every possible contingency, which of course would be impossible, so when the robot runs into something that wasn’t expected, it just craps the bed. Or worse, what if the programmer tells the robot to destroy a bunker, for instance, but then forgets to tell it to come home afterward? Or he remembers, but home moves because the enemy took over the base? No bueno.

Ligget catches on quickly and tells us what the problem is. Again, the problem is an issue with the military mindset, and not one of overarching ethics. Heywood’s solution was to create a robot that was better than humanity in every way. This won’t do: what if the robot decides to take over the war? What then? Oh no, that can’t stand.

There’s the further problem of the robot(s) taking over humanity, but that’s not the point that the story is trying to make. I’m not sure if this was spelled out, but my interpretation was that the top military brass would never ever stand for having their authority taken away by anything, even—especially!—something better at war than they are.

Ligget also sees this as an existential problem, at least. After the lecture, he rants and raves for a while. While he doesn’t say it, I interpreted some of his rant as saying that humanity will find anything better than it, pull it down to its level, and kick it until it dies. This gave me a chuckle.

The story isn’t super clear on what happens at this point, but apparently Ligget tries to destroy the lab and fails because Pim is loyal enough to Heywood to defend him and his stuff. Perhaps there was some self-preservation stuff there, too, which explains the ending of the story. I guess it wouldn’t be possible to destroy Pim because he’s so rad, so the military encases him in concrete and drops him into a river. I wonder why a river and not, say, the Mariana Trench? I guess in case humanity ever changes its mind? And can’t build another robot?

I have a lot of questions about this story, but they have very little to do with its point, which I found intriguing. I’m not sure how much of it is applicable to these modern times where we have AIs generating hilarious Twitter feeds and Bibles while also turning on the bedroom lights, telling me which is the sixth Beatles album (and then playing it), and reminding me to do laundry until I stop ignoring it.

Is it a scarily prophetic story? Nope. Is it a fascinating window into the techno-ethical concerns of 1954? Yeah, largely. Is it a look at the military officer mindset that is relevant to all times of history? Well, I don’t have any direct experience with that, but I’m gonna go with yep.

If I’m disappointed with anything, it’s that the story never once considers the merits of a military robot as a way of preventing human misery. Never once does anyone say “This robot will keep so many of our soldiers alive!” or anything like that. But maybe that’s a problem with me being too idealistic? I mean, war is war, and people are gonna die one way or the other. Robot soldiers are still gonna attack enemy human lives, and those human lives will retaliate against our human lives whether they have robots or not. I guess no war will ever just be robots vs. robots with all the humans safe and sound. And even if that were to happen in some fashion, those robots would still be diverting resources from things like food production, hospitals, and other necessities of human life. After all, that’s the whole point of war. It’s not about killing soldiers. It’s about making things inconvenient enough for the other side that they stop trying to kill your side, and doing it before they do the same thing to you.

Oh look, here I am, the day before Memorial Day, talking about how war is bad. Reeeaaaaal controversial opinion there, Thomas.

Anyway, support the troops: Bring them back home.

“A remotely-controlled robot with human input is too slow to be effective.” Still a problem with Mars rovers.

What’s with the skull and the guitarist? Did I miss something?

The skull is from the issue that the story came out of. The guitarist is Meat Loaf, ’cause two out of three ain’t bad.

I suspected the former. The latter . . . Heh.

Does this remind anyone else of Zelazny’s classic “Home Is the Hangman”? Because it feels similar to me (right down to the issue of remote-controlled robots being too slow). And this story came out 21 years BEFORE Hangman.

Robots could, theoretically, have been controlled by radio signals, something that both sides experimented with in aircraft during World War Two. And I think the issue of saving lives is somewhat addressed, as this is partly why the robot project exists in the first place. We also have our own factory robots that are now potential threats to the meat bags.

Also, Heywood had a partner, Russell, who goes crazy because of all the paranoia involving the spies and the military’s inability to accept their work. Ligget is his very unpleasant replacement.
